The AI Readiness Gap in Field Service


At Field Service Next West in San Diego last week, we co-led a workshop with Phil Ammon, Director of Service and Standards at Hach, and polled roughly 100 field service leaders in the room on where they stand.

Two-thirds of field service leaders said they haven't started deploying AI yet. Not evaluating. Not piloting. Just...not started. Though most leaders have seen the demos and understand the urgency, they’re still stuck. The gap between knowing AI matters and actually deploying it is wide, and the obstacles are more practical than philosophical.

Here's our recap covering the sharpest lessons from organizations like Hach that have moved from awareness to action: what they measured, how they handled data, where deployments actually break down, and what to do before you talk to a single vendor.

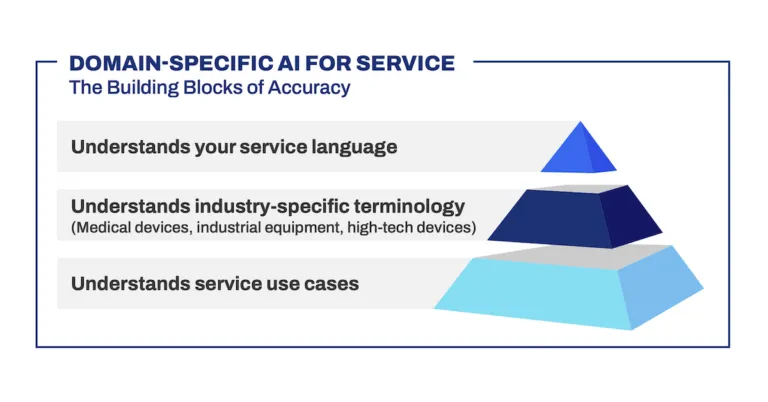

Why does your AI readiness problem feel like a technology gap, when it's actually a knowledge gap?

Most field service organizations have invested heavily in two things: capturing what happened (your FSM platform, your case data, your resolution codes) and storing what someone decided to document (your knowledge base, your manuals, your articles).

What almost none have built is a system of expertise: the diagnostic reasoning your best engineers developed over decades, the pattern recognition that lets a 20-year veteran identify a bearing failure before the fault code fires. That institutional knowledge lives nowhere except inside someone's head, and it leaves when they do.

The traditional response to knowledge loss has been documentation. Pull your best SMEs, put them in a room, have them write articles. Phil Ammon's team at Hach took a different approach, and the reason is instructive. With roughly 300 instrument types across 20 to 30 different technologies supporting wastewater and drinking water municipalities, Hach couldn't afford to turn their domain experts into knowledge managers. Instead, they built capture into the flow of work: pre-visit briefings, post-case summaries, the structured notes a technician enters before closing a ticket. Expertise gets captured as a byproduct of work that was already happening.

The strategic implication goes beyond efficiency. Organizations that build a genuine system of expertise aren't just making their current workforce more productive. They're creating an institutional asset that compounds over time and survives turnover. That reframe changes the ROI conversation entirely, and it's the foundation that makes AI actually work in field service contexts.

Which metrics actually get AI investment approved in field service?

The metrics field service leaders care most about are not the ones that get budgets approved. In live polling, 83% of attendees said customer satisfaction was their primary goal for AI. But when asked which metrics they planned to use to justify investment, the top answers were technician productivity, first-time fix rate, and reduced repeat truck rolls.

That gap matters because CSAT is a downstream outcome: slow to move and hard to attribute to a single initiative. A CFO evaluating an AI investment needs a direct line between spend and savings. Time-to-close and first-time fix rate provide that line. Each repeat truck roll has a measurable cost in labor and dispatch, and both metrics connect directly to AI-assisted resolution.
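To make that direct line concrete, here is a minimal sketch of the arithmetic, not a model from the workshop. Every name and number in it is a hypothetical placeholder (`jobs_per_year`, `cost_per_truck_roll`, the 75% and 80% rates), and it assumes each job not fixed on the first visit needs exactly one repeat truck roll, which understates the cost if some jobs take three or more visits.

```python
from dataclasses import dataclass


@dataclass
class ServiceBaseline:
    """Hypothetical inputs; replace every value with figures from your own FSM data."""
    jobs_per_year: int           # service jobs that require an on-site visit
    first_time_fix_rate: float   # share of jobs resolved on the first visit (0..1)
    cost_per_truck_roll: float   # labor plus dispatch cost of one visit


def repeat_rolls(b: ServiceBaseline) -> float:
    """Jobs not fixed on the first visit, assuming one repeat visit each."""
    return b.jobs_per_year * (1.0 - b.first_time_fix_rate)


def annual_savings(b: ServiceBaseline, improved_ftf_rate: float) -> float:
    """Cost of the repeat truck rolls avoided if first-time fix rate improves."""
    avoided_rolls = b.jobs_per_year * (improved_ftf_rate - b.first_time_fix_rate)
    return avoided_rolls * b.cost_per_truck_roll


# Illustrative numbers only; none of these come from the session.
baseline = ServiceBaseline(
    jobs_per_year=10_000,
    first_time_fix_rate=0.75,
    cost_per_truck_roll=400.0,
)

print(f"Repeat truck rolls today: {repeat_rolls(baseline):,.0f}")
print(f"Savings at 80% first-time fix: ${annual_savings(baseline, 0.80):,.0f}")
```

Swapping in your own job volume and per-visit cost turns a five-point improvement in first-time fix rate into a dollar figure finance can check against the spend request.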

The practical approach: build your business case around operational efficiency metrics even if your real motivation is customer experience. When time-to-close drops and first-time fix rate improves, CSAT follows as a natural consequence. You get the customer story for free once the cost story holds.

Does your data need to be clean before you can deploy AI in field service?

No — but data quality determines how far your deployment can go. Understanding what you're working with before you start is the key.

What Hach did before selecting a vendor was map their data landscape. Salesforce and ServiceMax held case management and field work orders. Brandfolder held operator manuals, service manuals, and white papers: documentation created when equipment was built and, in many cases, never updated since. Understanding where data lived, in what format, and across which product lines gave them something most organizations lack going into vendor conversations: an honest picture of what an AI would actually be working with.

The more important constraint is accuracy. AI systems in field service need to operate at 90% accuracy or higher to generate real productivity gains. Below that threshold, technicians start second-guessing recommendations, and you've added a tool that increases cognitive load rather than reducing it. Getting there requires ongoing investment in metadata structure and data linkage across systems. That work doesn't end at deployment. That's precisely when it needs to become structural.
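One way to keep an eye on that threshold is to compute accuracy per product line from technician feedback. The sketch below is an assumption about how that could look, not a description of Hach's system: the `(product_line, was_correct)` record shape, the function names, and the sample product lines are all hypothetical.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Tuple

ACCURACY_TARGET = 0.90  # the threshold cited in the text above

# Each record: (product_line, was_correct), where was_correct is False when
# a technician flagged the AI response as incorrect or incomplete.
Feedback = Tuple[str, bool]


def accuracy_by_product_line(feedback: Iterable[Feedback]) -> Dict[str, float]:
    """Share of AI responses per product line that technicians did not flag."""
    totals: Dict[str, int] = defaultdict(int)
    correct: Dict[str, int] = defaultdict(int)
    for product_line, was_correct in feedback:
        totals[product_line] += 1
        if was_correct:
            correct[product_line] += 1
    return {line: correct[line] / totals[line] for line in totals}


def below_target(accuracy: Dict[str, float],
                 target: float = ACCURACY_TARGET) -> List[str]:
    """Product lines whose accuracy falls short of the target, sorted by name."""
    return sorted(line for line, acc in accuracy.items() if acc < target)


# Hypothetical sample: 9 of 10 analyzer responses correct, 3 of 4 sensor responses.
sample = (
    [("analyzer", True)] * 9 + [("analyzer", False)]
    + [("sensor", True)] * 3 + [("sensor", False)]
)

accuracy = accuracy_by_product_line(sample)
print(below_target(accuracy))
```

Breaking the number out by product line matters because an overall average can sit above 90% while a line with thin or outdated documentation falls well below it.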

Why do field service AI deployments fail after a successful pilot?

Field technicians don't resist tools that make their jobs easier. They abandon tools that add steps. The difference between a deployment that sticks and one that quietly dies is whether AI surfaces insights inside the interfaces technicians already use — their dispatch screen, their pre-trip brief, their case management workflow — or whether it requires opening a new app, creating a new login, or remembering to check a separate system.

Phil Ammon's team at Hach designed around a simple principle: every technician needs a personal win. Not a departmental win. Not a CSAT improvement. Something that made their specific job easier today. A technician who arrives on site with a pre-populated briefing showing the most likely failure modes for that customer's equipment didn't have to do anything to get that value. That frictionless experience is what drives adoption without a change management campaign.

There's a harder dimension worth acknowledging. Frontline technicians often worry AI will eliminate their roles. The honest response isn't vague reassurance — it's a clear statement of intent. The goal isn't headcount reduction. As service volume grows, AI handles high-frequency repetitive resolution work, freeing human experts for complex cases, escalations, and judgment calls that AI can't make. The role becomes more valuable, not more vulnerable. That message needs to be delivered directly, not left to rumor.

How do you make sure your field service AI keeps getting smarter after go-live?

The organizations that get lasting value from AI in field service are the ones that treat the model as something that needs to be continuously improved, not a product you configure once and deploy. That requires someone owning the feedback loop from day one.

At Hach, the escalation team, senior engineers who already handled complex cases, took on a new standing responsibility after deployment: reviewing cases where technicians flagged an AI response as incorrect or incomplete, correcting the knowledge base, and feeding those corrections back into the model. The role didn't require a new hire. It was an evolution of work already happening.

The structural implication is significant. When the people closest to where the AI gets things wrong are the same people responsible for fixing it, the knowledge investment compounds continuously. Organizations that build this loop into their deployment from day one build a durable advantage. Organizations that skip it build a system that plateaus.

What should field service leaders do before evaluating AI vendors?

For most field service organizations, the biggest obstacle to AI adoption isn't finding the right vendor. It's the internal process that has to happen before a vendor conversation can go anywhere. Procurement review, legal signoff, IT security assessment, executive alignment — in large organizations that sequence can take six months or more. The leaders who navigate it well do three things before they start evaluating solutions.

First, map your data. Not clean it. Map it. Understand where your data lives, what format it's in, and what it would take to connect it across systems. This work costs nothing but time, makes you a significantly better buyer, and surfaces the data gaps that would otherwise surprise you mid-deployment.
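A data map can start as a simple structured inventory. The following is a minimal sketch under stated assumptions: the `DataSource` fields, the system names, and the product lines are hypothetical placeholders, and `link_key` stands for whatever field (a serial number, an asset ID) could join one system's records to another's.

```python
from dataclasses import dataclass
from typing import FrozenSet, Iterable, List, Optional


@dataclass(frozen=True)
class DataSource:
    """One entry in the data map. All field names are illustrative."""
    system: str                    # where the data lives
    content: str                   # what it holds
    data_format: str               # structured records, PDFs, free text, ...
    product_lines: FrozenSet[str]  # which product lines it covers
    link_key: Optional[str]        # field that could join it to other systems, if any


def unlinked_sources(sources: Iterable[DataSource]) -> List[str]:
    """Systems with no field that connects their records to other systems."""
    return [s.system for s in sources if s.link_key is None]


def uncovered_product_lines(sources: Iterable[DataSource],
                            all_lines: Iterable[str]) -> List[str]:
    """Product lines that no mapped source covers."""
    covered = set()
    for s in sources:
        covered |= s.product_lines
    return sorted(set(all_lines) - covered)


# Hypothetical inventory; replace with your own systems and product lines.
inventory = [
    DataSource("fsm_platform", "cases and work orders", "structured records",
               frozenset({"line_a", "line_b"}), link_key="asset_serial"),
    DataSource("document_store", "service manuals", "PDF",
               frozenset({"line_a"}), link_key=None),
]

print("No link key:", unlinked_sources(inventory))
print("Not covered:", uncovered_product_lines(inventory, ["line_a", "line_b", "line_c"]))
```

Even a short inventory like this surfaces the two gaps that tend to surprise teams mid-deployment: sources that cannot be joined to anything else, and product lines with no data behind them.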

Second, build your business case around operational metrics. Time-to-close, first-time fix rate, and truck roll cost are the numbers that connect directly to spend and savings. Customer satisfaction belongs in the conversation, but it can't carry the business case on its own.

Third, pre-align your stakeholders before the formal process starts. The worst outcome in an AI investment cycle is a CEO seeing a spend request for the first time under deadline pressure. The best outcome is a decision that feels obvious to everyone at the table because they've been part of the conversation for months.

How do I get started?

The window for getting ahead of this is still open. Two-thirds of field service leaders haven't started yet, which means the organizations that move deliberately now, with the right data foundation, the right internal alignment, and the right metrics, will have a meaningful head start on the majority of their peers.

The through line across every insight in this session is the same: AI in field service succeeds when the conditions for it are built intentionally.

- A system of expertise that captures knowledge in the flow of work.

- A business case built on operational metrics that finance actually responds to.

- Deployments designed around the technician's daily interface, not around a separate tool they have to remember to use.

- And a feedback loop that makes the system smarter every week after go-live.

The technology is ready. The harder work is organizational. Start there.

Book a demo to see how field service leaders are closing the AI readiness gap with purpose-built resolution intelligence.